One very encouraging sign of health in the Agendashift community is the way things are beginning to happen without my personal involvement. It really is getting a life of its own! For example, our Slack has spawned a #bookclub channel for coordinating a weekly get-together, and partners have been meeting to share and experiment with different ways to debrief their Agendashift surveys.

In the latter case, some of that learning has been incorporated into our survey reporting tool, the ‘unbenchmarking’ report. Here are three demos, using an illustrative subset of data from the 2017-18 global survey (which is one way to try a mini assessment for yourself), and a new feature that makes it easier for facilitators to choose which report sections to share and in what order.

Demo 1: The classic report

First off, here’s what could be described as the ‘classic’ unbenchmarking report, with sections pretty much in the order described in chapter 2 of the book, plus two sections (Above profile and Below profile) that rely on some machine learning functionality that was beyond the scope of the book:

Tip: Page through the sections of the report using the PgUp and PgDn buttons (see the navigation control at the top right) or the corresponding keys on your keyboard or buttons on your presentation clicker.

Demo 2: The minimum viable debrief

If you’ve read the book, you’ll know that I encourage facilitators to move quickly over the early sections of the report, leaving plenty of time for the two sections that identify (respectively) areas of agreement and disagreement. Partner Olivier My takes that advice and turns the dial up to 11 with his ‘minimum viable’ debrief:

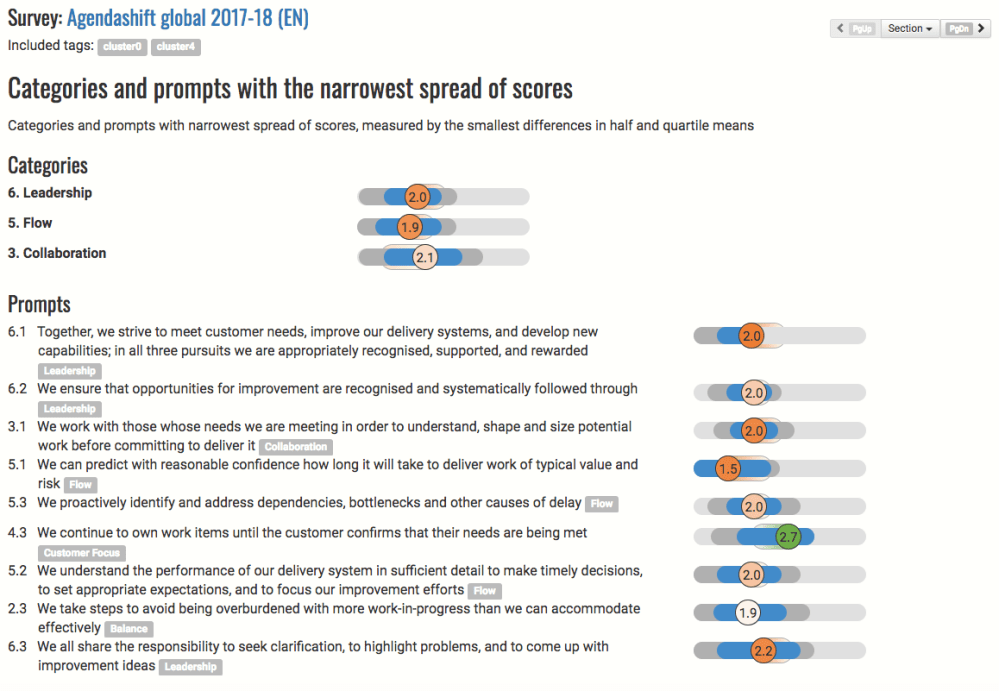

Here we’ve dispensed even with the cover page, going straight to the categories and prompts with the narrowest spread of scores, i.e. areas of agreement:

Interestingly, we seem to be in agreement mainly on weaknesses! There’s a good chance that there are some quick wins here.

Now that we’re comfortable with the data, let’s go to the second page of Olivier’s report:

Very different! The issue here isn’t obvious weakness, it’s inconsistency – whether that’s of actual behaviour and outcomes or of perception. Some typical questions for the facilitator to ask here:

- “Who scored a 3 or 4 for this one and wouldn’t mind sharing their thinking?”

- “Who can imagine why someone might score a 3 or a 4 here?” (a safer version of the previous question)

- “Who has good examples of this working well?”

- “Who’s different to that? Who had a 1 or a 2?”

- “What might explain the 1s and 2s?” (again, the safer version of the previous question)

- “What’s the impact when it’s not working well?”

Demo 3: The compact debrief

Steven Mackenzie uses a debrief structure very different to mine, narrowest and widest alternating with strongest and weakest:

- Score distributions

- Categories

- Categories and prompts with the narrowest spread of scores

- Strongest categories and prompts

- Categories and prompts with the widest spread of scores

- Weakest categories and prompts

(Full report beginning at the Contents page here)

It took me a while to get this but I’m keen now to try it for myself at my next available opportunity. Here’s how Steven explains it:

- Categories as bar charts – Easy introduction to the spread of data

- Categories as sliders – I explain the slider visualisation of the same data, helping people understand the slides to come

- Areas of strongest agreement – I expect the group to recognize these behaviours, and be mentally comfortable to accept what follows

- Highest scores – I will congratulate on some specifics here, offer them the option to talk if they are passionate, but warn that we’re seeing variation in responses creeping in

- Widest variation – I will ask for discussion here

- Weakest scores – I will ask for discussion here
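For the curious, here is a rough Python sketch of what the four ranked sections in a structure like Steven’s are doing. It is an illustration only: I’m assuming “spread” means the standard deviation of a prompt’s scores (the report tool may well calculate it differently), the `rank_prompts` function is hypothetical, and the prompts and scores are made up.

```python
from statistics import mean, pstdev


def rank_prompts(scores_by_prompt, top_n=1):
    """Rank prompts by spread and by average score.

    Assumption: spread is the population standard deviation of the
    scores; this is not necessarily how the unbenchmarking report does it.
    """
    stats = {
        prompt: (mean(scores), pstdev(scores))
        for prompt, scores in scores_by_prompt.items()
    }
    by_spread = sorted(stats, key=lambda p: stats[p][1])  # narrowest first
    by_mean = sorted(stats, key=lambda p: stats[p][0])    # weakest first
    return {
        "narrowest": by_spread[:top_n],        # areas of agreement
        "strongest": by_mean[::-1][:top_n],    # highest average scores
        "widest": by_spread[::-1][:top_n],     # areas of disagreement
        "weakest": by_mean[:top_n],            # lowest average scores
    }


# Made-up survey responses on a 1-4 scale, one list per prompt
example_scores = {
    "Prompt A": [1, 1, 2, 1],
    "Prompt B": [4, 4, 4, 4],
    "Prompt C": [1, 4, 2, 4],
    "Prompt D": [2, 3, 2, 3],
}

sections = rank_prompts(example_scores)
for name, prompts in sections.items():
    print(f"{name}: {prompts}")
```

With this toy data, Prompt B is both the narrowest and the strongest, Prompt C has the widest spread, and Prompt A is the weakest, which is the kind of contrast the debrief conversation then explores.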

So there you go: community learning captured in the technology!

Experience it first hand

Of my upcoming public workshops, that “next available opportunity” is the 1-day workshop on April 6th in Raleigh:

- 6 April, Raleigh, NC, USA – Mike Burrows, Kert Peterson

- 14-15 May, Munich, Germany – Mike Burrows, Mike Leber

- 22-23 May, Cardiff, UK – Mike Burrows

The survey debrief is the catalyst for a generative process that produces the outcomes representing action areas, themes, goals and ambitions for the organisation’s transformation. This is session 2 of a 4- or 5-session public workshop, and easily a workshop in its own right if you’re doing it privately with a client or your employer.

As already mentioned, you can try the mini assessment by participating in the 2017-18 global survey; there’s also a free trial, allowing you to survey small teams. Full partner status gets you a range of full-sized templates, all our workshop materials, and (under your control) a listing in our partner directory.

Blog: Monthly roundups | Classic posts

Links: Home | About | Partners | Resources | Contact | Mike

Community: Slack | LinkedIn group | Twitter