In the weeks leading up to Agendashift’s launch earlier this month, a number of things came together at just the right time. Two of those in combination – Clean Language and Cynefin – I blogged about recently. Another key element, the pathway, I have hinted at but not described adequately. It’s time to put that right.

Some background

The online part of Agendashift is built around the values-based delivery assessment, our distinctively non-prescriptive, non-judgemental, methodology-neutral tool. The 2014 version of this was extracted from my book (and not just by me – others had the same idea), and it has been revised beyond all recognition since then, first in close collaboration with Dragan Jojic and more recently by community work, mainly via our Slack group.

The assessment’s tone has changed noticeably over that two-year period. It is of course still organised by those six values – transparency, balance, collaboration, customer focus, flow, and leadership – but the 40+ prompts are much less about specific practices and much more about the outcomes derivable from them. That might seem like an odd change to make, but we find that people buy into those outcomes even when the by-the-book practices that lead to them can seem unattractive.

For example, who wouldn’t want to be able to say this about their organisation:

We understand our performance sufficiently to make timely decisions, to set appropriate expectations, and to guide focussed improvement

Buy into that outcome, and implicitly you’re buying into the changes that will bring it about. Compare that to the older version of this prompt:

We measure lead times and predictability and seek to improve them

With the benefit of hindsight I can sympathise with those who reacted with push-back instead of buy-in! The original wording was well-meant but ill-thought-out, almost guaranteed to provoke resistance in anyone who has experienced the mis-application of metrics or who wonders why we seem to promote one set of metrics to the apparent exclusion of others.

Outcome, agreement, action, planning

Much of coaching or facilitating with Agendashift can briefly be summarised as follows:

- Using the prompts to establish a shared understanding of the current situation, expressed in terms of how well the prompts describe reality (‘scoring’ the prompts)

- Using the prompts to get some sense of what’s important to people – first individually (‘starring’ selected prompts) and then collectively

- Prioritising those generic outcomes, then turning them into agreement on more specific outcomes that are more immediately realisable

- Generating, framing, developing, and organising actions that we hope will make the most important outcomes a closer reality

This is already powerful, but it’s not quite enough. We need pathways, shorthand for a process that turns something that could feel very multidimensional and amorphous into something sequential that can be tackled step-by-step over a period of time. It turns “a lot of good stuff” into a plan (where that’s warranted) or an agreed way forward (if that’s all that’s needed).

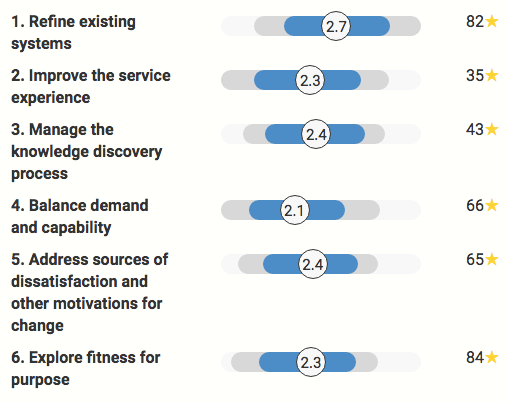

Agendashift’s latest trick is the ability to reorganise the values-based assessment on demand. Here’s a summary chart that illustrates the idea, using the ‘Pathway edition’ template:

Do the category names have a familiar ring? They’re inspired by Reverse STATIK, the ‘Reverse’ a clue that this is not the classic STATIK process that builds up to a Kanban system implementation, but a process of review and reworking that starts with our existing management systems, whatever they are.

In our example, the first category, Refine existing systems, already scores relatively well (or rather, its prompts do), but look at the number of stars! This category contains some of the most basic things to get right, and it would seem that there is strong support for making further improvement here before moving on to more challenging things.

Improve the service experience isn’t as well supported, but there’s a wide range of scores here. Before dismissing this category prematurely it would be wise to explore any differences of opinion. What’s going on here? Differences of understanding of the current situation, of what’s possible, or of the scope of the exercise? Well worth checking.

The next three categories, Manage the knowledge discovery process, Balance demand and capacity, and (deep breath) Address sources of dissatisfaction and other motivations for change have moderate levels of support. Sequence-wise, they’re in at least roughly the right order.

The last category, Explore fitness for purpose, has the strongest support, but are we ready to tackle it yet? Would it make sense to tackle the basics before we address things that we know will be more challenging – organisation design, leadership behaviours and the like? There’s no one right answer to that question, but it’s worth asking!
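For readers who like to see the mechanics, the kind of per-category summary read off the chart above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Agendashift itself is implemented: the data layout, the 1-4 scoring scale, the sample numbers, and the `summarise` function are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical data: each prompt belongs to a pathway category, carries the
# scores respondents gave it (a 1-4 scale is assumed here) and a star count.
prompts = [
    {"category": "Refine existing systems", "scores": [3, 4, 3], "stars": 5},
    {"category": "Refine existing systems", "scores": [3, 3, 4], "stars": 4},
    {"category": "Improve the service experience", "scores": [1, 4, 2], "stars": 1},
    {"category": "Explore fitness for purpose", "scores": [2, 2, 1], "stars": 6},
]


def summarise(prompts):
    """Group prompts by category; report mean score, score range and stars."""
    grouped = defaultdict(lambda: {"scores": [], "stars": 0})
    for prompt in prompts:
        group = grouped[prompt["category"]]
        group["scores"].extend(prompt["scores"])
        group["stars"] += prompt["stars"]
    return {
        category: {
            "mean_score": round(mean(group["scores"]), 2),
            "score_range": (min(group["scores"]), max(group["scores"])),
            "stars": group["stars"],
        }
        for category, group in grouped.items()
    }


summary = summarise(prompts)
for category, stats in summary.items():
    print(category, stats)
```

A wide `score_range` is the cue to explore differences of opinion before dismissing a category, and a high `stars` total indicates strong support; deciding what to tackle first remains a conversation, not a calculation.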

It should be clear now that pathways here aren’t cookie-cutter plans, but starting points to be developed collaboratively. They can be used in conjunction with other tools (story maps and impact maps, for example). They can be cross-checked with other models (the Agendashift transformation strategy model or your favourite Agile implementation roadmap, for example), to find any gaps and help create a more robust plan. Alternatively they can form the basis of a simple working agreement, for example to revisit each category over the course of the next six retrospectives – a 10-minute conversation for 12 weeks of impact!

You can do this too

Are you in the business of Lean-Agile transformation – a coach, consultant, or manager perhaps? Join our partner programme and you’ll have all these tools at your fingertips. Or you can use the services of one of our growing band of awesome partners, people who know when to put prescription aside, to start listening, and to facilitate rather than impose a process of transformation, a process that takes you towards the outcomes you want.
